The Diagnosis Gap

Why brands keep buying campaigns when what they actually need is someone who can read their own data from the inside

by Manny V - noētico lab

Picture this: it’s a routine Tuesday morning review call. The kind that starts at 9am and goes sideways by 9:12.

The slides were immaculate. Colour-coded by channel. Three shades of green for performance, a small amber warning on Meta CPMs, a confident line chart trending upward. The Head of Growth presented with the quiet authority of someone who had spent the weekend building this deck. Everyone nodded. The founder made a note. The agency account manager unmuted briefly to confirm the ROAS figures.

Then the CFO asked: “So why is our net margin down for the fourth consecutive month?”

The room went quiet in the particular way rooms go quiet when a question exposes the gap between the story being told and the business being run.

Nobody had lied, and nobody was incompetent. The numbers on the slides were real. The problem was something more uncomfortable: nobody in that room actually knew what was causing the margin to erode. The founder, the marketing lead, the agency: none of them could identify the issue from the slides, because nobody had done the work of diagnosis. They had done the work of reporting. These are not the same thing. They never were. But for a long time, the difference did not matter as much.

Now we have reached the point where it does, more than ever. And most brands are not ready for it.

The gap nobody is naming

There is a word that the marketing industry systematically avoids: diagnosis.

We have audits, reviews, quarterly business reports, attribution dashboards, media mix models, brand trackers, monthly performance decks. We have more data than any generation of marketers before us. And yet the leaders I talk to, the founders, CMOs, growth leads, investors overseeing portfolio companies, all tell me versions of the same story:

We have all this information and we still do not know what to actually do.

And so they do the only thing they can do at that point: at the last minute, before the board meeting or the quarterly review, they cobble together a rough sketch of simplistic insights from everything they have accumulated. If they are lucky, the data is not wrong. But they never built the interpretive infrastructure to make it mean anything. The sketch looks like analysis. It is not analysis. It is panic dressed in PowerPoint, with the occasional spark of clarity arriving by chance.

This is not a measurement problem or a data problem. It is not even a strategy problem in the conventional sense.

It is clearly a diagnosis gap.

A diagnosis gap is what happens when an organisation can measure almost everything and understand almost nothing. When the distance between the numbers they collect and the decisions they need to make keeps widening, filled with reports that produce anxiety rather than clarity, meetings that produce consensus rather than truth, and campaigns that produce activity rather than growth.

The philosopher Byung-Chul Han has argued that data, in its raw accumulation, resembles pornography more than it resembles knowledge: it stimulates without revealing, and it presents without meaning. It produces the sensation of understanding while leaving the underlying reality untouched. That framing is uncomfortable precisely because it is accurate. Most marketing data stacks are architectures of stimulation. They show everything at once. They are asked to explain nothing.

To understand why, it helps to name the distinction precisely.

An audit is an inventory. It tells you what you have: which channels are active, what the metrics show, how this quarter compares to last. Audits are necessary, but they are not sufficient on their own. Most marketing engagements begin and end with something that looks like an audit (a QBR, a performance review, a mid-year strategy session) and call it strategic thinking.

A diagnosis is a causal map. It asks not what the numbers show, but why they show it, and specifically, what single structural constraint, if removed, would most change the system’s output. Diagnosis requires knowing the difference between a metric that is a symptom and a metric that is a cause. It requires being inside the organisation long enough to understand not just the data, but the decisions the data is producing. And it requires the willingness to name something uncomfortable: that the constraint is often not where the company has been looking.

The confusion between correlation and causation sits at the centre of this failure. Most teams, when they identify a performance problem, reach for the most visible lever: budget. If conversion is down, spend more. If ROAS is declining, change the bid strategy. If brand awareness is low, add a channel. The organisations I work with most often arrive after having cycled through this loop: more budget requested, more budget allocated, more metrics produced, the same underlying constraint intact. What they find hardest to accept is that additional spend does not fix a structural problem. It just finances it.

Most brands have been buying audits and calling them diagnoses for years. The difference does not show up in the reporting. It shows up on the P&L.

Three patterns I keep finding in client rooms

I have spent the last decade inside the machine: at Ogilvy, Mindshare, OMD, and inside smaller, more specialised shops focused on digital lead generation and e-commerce performance, then embedded directly as CMO and growth consultant inside companies from luxury to iGaming to SaaS. I have built the dashboards. I know what they actually show. More importantly, I know what they cannot tell you.

Three patterns appear in almost every organisation where growth has stalled.

Pattern one: Dashboard Theatre.

The dashboard exists. It is updated regularly. It is reviewed in a meeting. It does not change what anyone decides.

I once worked with a brand that had a Looker Studio dashboard connected to every marketing channel, GA4, their CMS, and their email platform. It was genuinely impressive. It had been built over six months by a data analyst. Leadership opened it every Monday.

When I asked the founder, “What is the last decision this data actually changed? Not an action, a decision?”, he paused for a long time. Then he said, slowly: “I don’t think it ever has contributed to anything other than validating what we already knew about competitors’ seasonal budgets. We look at it to confirm all those patterns we already knew, and occasionally to explain away some mishandling by the junior at the agency we hired.”

Dashboard Theatre is often mistaken for a symptom of burnout or even laziness, but it is what happens when measurement is decoupled from decision-making. The numbers become stage props, reassuring evidence that the company is paying attention, rather than instruments that change behaviour. Every report, with its colour-coded charts, gets filed so that the red metrics and KPIs can be spotted easily. And growth keeps stalling.

Pattern two: The Panic Cadence.

You will recognise this one immediately.

Sales are down on Wednesday. Someone escalates to the Head of Growth. The Head of Growth schedules an emergency call with the agency. The agency looks at the last 14 days of data from a single source and recommends reallocating budget from high-CPA, low-conversion-volume campaigns to those with high or rising ROAS, and, of course, testing some new creatives. The creatives go live on Friday. By Monday, the numbers look better. By the following Wednesday, they are down again.

The Panic Cadence is a five-step loop:

observe a metric drop → escalate → prescribe a tactical change → see a temporary recovery → forget the pattern → repeat.

It is the organisational equivalent of pain medication: it treats the symptom, masks the signal, and leaves the underlying condition completely untouched.

The Panic Cadence is self-reinforcing because it feels like responsiveness. The company looks as though it is in control because decisions are being made. Something is always being tested. What is not being done is thinking structurally about why the numbers keep cycling, what constraint sits underneath the weekly variance, and what the business would look like if the loop were interrupted rather than managed.

Pattern three: The Strategy–Execution Rift.

Every company I have worked with has strategy documents. Maybe some positioning papers with brand guidelines. Definitely some go-to-market plans, and even more annual objectives with OKRs attached.

And in almost every case, those documents bear almost no resemblance to what the marketing team actually does on a Tuesday. Even worse, the team can sense these objectives were plucked out of thin air.

Let’s call it the Strategy-Shaped Output Funnel. The top of the funnel looks like strategy: purpose, values, brand platform, target audiences, key messages. The bottom looks like execution: Meta campaigns, email sequences, landing pages, influencer briefs.

But the middle, the connective tissue that would translate strategic choices into executional decisions, is usually either missing or was written by a different person, in a different quarter, on the basis of different assumptions.

The result is a brand that says one thing and does another. Campaigns that run on heuristics and instinct rather than strategy. A performance team optimising for conversion while the brand team briefs for premium perception, all in the same week, to the same audience, with contradictory signals.

Why the gap widened

These three patterns are not new. But something happened in the last five to seven years that made them significantly harder to close.

Three forces converged.

First: Attribution chaos

iOS 14.5, GDPR, the deprecation of third-party cookies, and the algorithmic black-boxing of every major ad platform created a measurement environment in which it became structurally difficult to know what was working. Platform-reported ROAS became increasingly disconnected from finance-reported profitability. The signal got noisy. Leaders responded by adding more dashboards, more metrics, more reports, which made the gap between measurement and understanding wider, not narrower.

Second: The rise of the template economy

The marketing services industry responded to the attribution chaos and the data deluge described above by commoditising its outputs. Side-hustling marketers with enormous followings deliver daily best practices, playbooks, frameworks, and so forth. The same 90-day launch plan, the same creative testing methodology, the same brand architecture template, all reproduced across thousands of clients, none of whom have exactly the same context, constraint, or organisation. Templates are efficient, but they help only when they signal where an issue needs a deeper dive. Otherwise, they become the enemy of genuine diagnosis. A template is a solution looking for the right problem. Diagnosis is the reverse.

Third: The strategic leadership vacuum

Mid-market companies, generally those with seven-figure revenues, teams of 10 to 50 people, real marketing budgets and genuine growth ambitions, systematically lack access to senior strategic thinking. They cannot afford a full-time CMO. They hire agencies who are structured to execute, not to diagnose. They use fractional operators who show up for a few hours a month and give advice from the outside. And so the Diagnosis Gap grows, quietly, week by week, quarterly review by quarterly review, until the CFO asks the wrong question on a Tuesday morning.

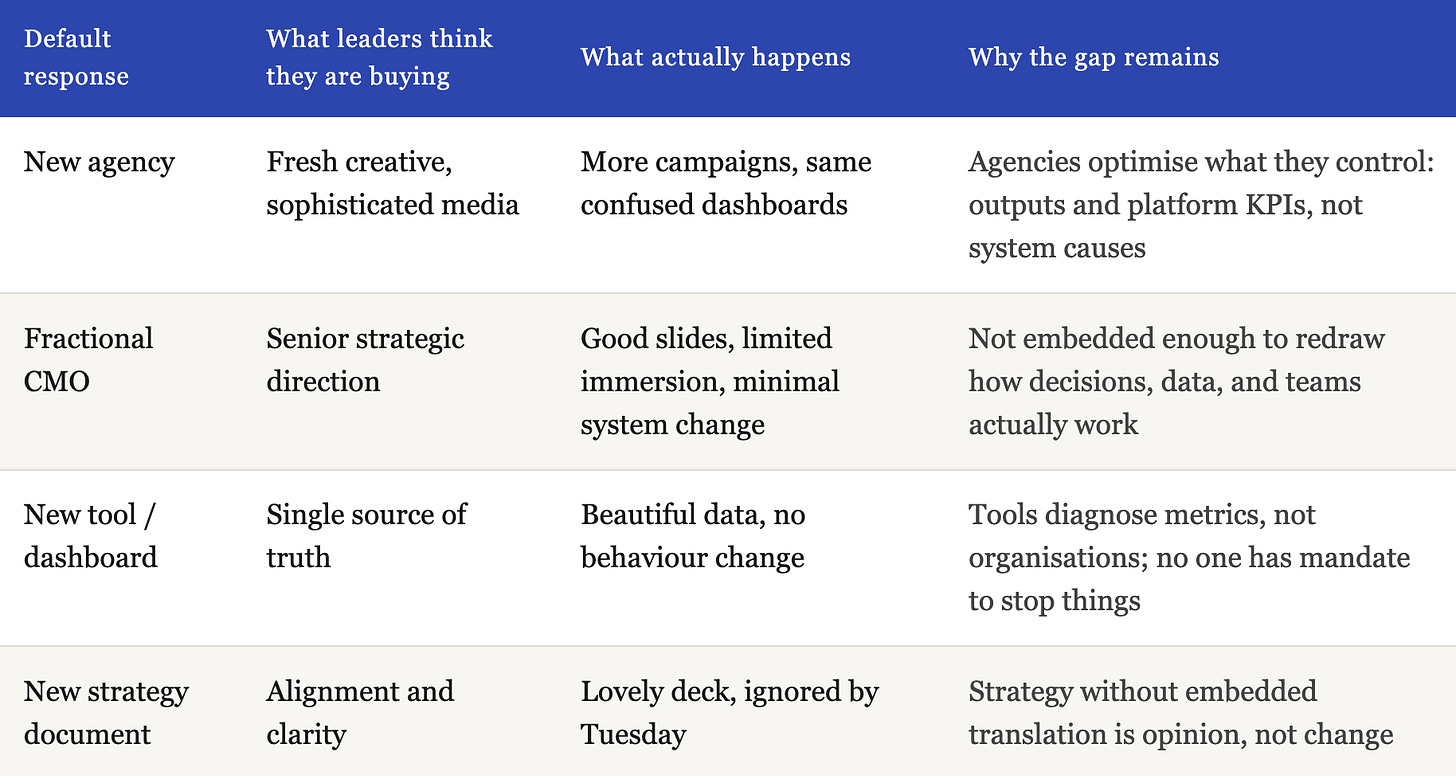

The wrong responses

Here is what makes the Diagnosis Gap particularly resistant to being closed: the default responses to growth pain are structurally incapable of addressing it.

The new agency hire.

This is the most common response to stalled growth. A new agency brings in fresh creative, pitches more sophisticated media buying, and usually offers a different approach to audiences. But agencies are, by architecture, optimisation machines, and what they are wired to optimise for is production throughput. Complex client situations get absorbed into pre-built production lines: the creative brief template, the media plan template, the monthly reporting cadence. And almost always, the recommendation at the end of the first review is to adjust the budget upward. The campaigns run uninterrupted until it’s time to report on them. Metrics improve on certain dimensions. The underlying constraint (the misaligned strategy, the broken measurement system, the organisational pattern that keeps producing the same outcomes) goes completely untouched. Agencies are structured to produce outputs. They are not structured, incentivised, or positioned to diagnose the system those outputs operate within, although their leaders often sound as if they are, “because they’ve seen this pattern in other clients as well”. Those are clients with the same issues, though.

The fractional CMO.

This is the more sophisticated response, and it is closer to what is needed. A senior brain, part-time, providing strategic direction. The problem is one of immersion, and immersion is not merely about hours in the building. It is about proximity to the texture of the organisation. To close the diagnosis gap, you need to be close enough to smell the detergent used for the floor before everyone arrives. The person who cleans the office at 7am, if you talk to them, will tell you something about the culture of an organisation that no performance dashboard ever will. Whether there is any process behind their supplies, for example. Causality craves that kind of nuance. The fractional CMO who appears on a Tuesday for two hours and sends a Loom with strategic recommendations cannot access it. What they access is the curated version of the organisation, the version leadership has prepared for external consumption.

And there is a symmetric problem on the other side. Senior executives who have been inside the same organisation for years develop deep, unconscious routines. They stop seeing what is actually happening behind the moats they have built around their own assumptions. They need the diagnostic intelligence of an outsider, but not one who is so outside the system that they cannot read its actual signals. The embedded model is the only position that resolves this tension: close enough to hear the truth, detached enough to see it clearly.

The new tool or dashboard.

This is the most seductive response because it feels like solving the measurement problem. Better attribution models, more sophisticated BI, even a unified data warehouse. These investments are not wrong, but they address Layer 4 of the problem (platform metrics and data infrastructure) when the constraint is at Layer 1 (does the business understand what is actually driving its growth or blocking it). A better dashboard tells you more things you do not know what to do with. Diagnosis tells you the one thing you need to act on next.

The pattern across all of these responses is the same: they sit outside the system they are trying to change. They address a layer - creative, strategy, measurement, governance - without ever getting close enough to the organisation’s actual operating reality to see what is causing the growth to stall.

What real diagnosis looks like

Diagnosis is not that complicated. It is just uncomfortable, slow, and almost impossible to do from the outside.

It requires four things that the marketing services industry has largely stopped providing.

It requires immersion.

You cannot diagnose a system you are not inside. The patterns that matter are how decisions are actually made, which metrics trigger anxiety and which trigger action, what the real relationship between the brand team and the performance team looks like on a Wednesday afternoon, which agency claims go unchallenged and which are interrogated. None of these are visible in a brief, a pitch, a monthly review call, or a strategy deck. They are only visible when you are in the room. Week after week.

The embedded approach is not a preference. It is an epistemological requirement. You cannot see a system clearly while standing outside its constraints.

It requires causal thinking, not descriptive thinking.

Most marketing analysis is descriptive: here is what happened, here is how it compared to last week, here is the breakdown by channel and creative and audience. Description is necessary, but it is not sufficient. Diagnosis asks a different question: why did this happen, and more specifically, what single structural thing, if changed, would most improve the system’s output?

By structural, I do not mean a campaign setting, a budget line, or a creative direction. I mean something in the way the organisation itself is constituted (a process, a misalignment between teams, an unexamined assumption about the customer, a relationship with a supplier or a platform) that constrains the system’s output regardless of how well the campaigns are executed. Structural constraints, like bacteria that withstand extreme temperatures, are the ones that survive every agency review and every quarterly plan. They are the ones that nobody names because naming them would require someone to own them.

Eliyahu Goldratt called this the constraint: the one bottleneck in any system through which all throughput must pass, and which therefore determines the system’s overall performance. Finding the constraint is not about analysing every part of the funnel equally. That would be a pure waste of resources. It is about mapping where the system bleeds most, relative to its potential, and attacking that specific point. And this particular point is rarely the easiest to address, or the one the agency is most comfortable pinning to the weekly performance report. Those comfortable items mostly serve to justify the monthly fee.

A concrete example. A multi-brand luxury fashion retailer was bringing a recurring complaint to every agency review: campaign structure. The conversation was always about audience targeting, bid strategy, creative refresh. Revenue was almost entirely dependent on when their partner brands launched seasonal drops or activations. And what their paid media campaigns were actually doing, examined closely, was harvesting the demand those partner brands had already generated through their own equity and marketing. At a low ROAS. And in doing so, they were quietly creating an antagonistic relationship with the very brands they depended on: the partners could see in the data that this retailer was bidding against them on their own brand terms, extracting value from their investment rather than building something of their own.

Nobody in the weekly campaign review was asking why. Because the constraint was not visible inside the campaign and the reported KPIs. It was visible only from inside the organisation’s commercial relationships and positioning logic.

The diagnosis surfaced it after some months of observation and endless discussions. The retailer had no independent reason for a customer to choose them, no curatorial voice, no fashion perspective, no occasion-based advice. They were a distribution channel pretending to be a brand. After building genuine customer personas around fashion intention rather than product preference, and shifting communication toward curation and direct style advice, something unexpected happened alongside the performance shift: their physical stores started getting busier. Customers who had only ever found them through a brand’s sponsored result were returning because they now had a reason to.

The campaign structure had been irrelevant the entire time.

It requires organisational thinking, not just analytical thinking.

Two companies with identical funnel metrics can need very different interventions, because their organisations are different. Their decision-making styles are different. Their “soft” elements (how leadership sets direction, what the team actually believes in, how they handle internal conflict and strategic disagreement) shape which interventions will be adopted and which will die in the implementation gap.

The McKinsey 7S framework, popularised by Peters and Waterman in 1982, remains one of the most accurate maps of why good strategies fail in practice: the structure, the systems, the shared values, the skills, the style, or the staff are misaligned with what the strategy requires. Diagnosing an organisation means scoring all seven elements, not just the hard, technical ones (strategy, structure, systems) but also the soft ones (shared values, skills, style, staff), because low scores on the soft elements almost always explain why changes to the hard elements fail to stick.

It requires epistemic humility about complexity.

Dave Snowden’s Cynefin framework makes a distinction that every marketing strategist should have tattooed somewhere useful: not all business problems belong in the same category. Some are Simple (cause-and-effect is obvious, best practice applies). Some are Complicated (cause-and-effect requires expert analysis, but the right answer exists). Some are Complex (cause-and-effect only becomes visible in retrospect, and you must probe-sense-respond rather than plan-execute-measure).

Most marketing problems that are treated as Simple are actually Complex. A brand positioning problem is hard to spot and evaluate. So is creative fatigue. So is an audience targeting challenge. These are not problems that any best practice solves automatically. They require safe-to-fail experiments: small, reversible tests designed to surface patterns rather than confirm hypotheses. The Diagnosis Gap is, in part, a complexity problem: an industry that has trained itself to apply Complicated-domain solutions to problems that actually live in the Complex domain.

A week inside the mess

Let me show you what this looks like in practice.

Week one

The first thing I do is not look at any data. I interview people, to get the nuance that no AI can provide by simply ingesting big data. Seven to nine stakeholders: the founder or general manager, the marketing lead, the performance team, the sales or customer success function, finance, and - critically - the external agency or freelancer if one exists.

The questions at this stage are designed to surface inconsistency, not consensus. What matters is not what each person says, but where the accounts diverge, because the divergences are where the diagnosis lives. Every senior diagnostic conversation at this stage follows a similar structure, but the exact questions adapt to what the organisation reveals. The answers are almost never consistent across stakeholders. These inconsistencies are the diagnosis.

Then I pull the full funnel data. Every channel. The last ninety to one hundred and eighty days, sometimes further back. And I do something that sounds basic but is almost never done: I reconcile the platform numbers against the finance numbers. For example: Meta ROAS against backend revenue, Google Ads conversion volume against the CRM-recorded transactions. The reconciliation gap, the difference between what the platforms claim and what the business actually recorded, is material in every single case, even after allowing for the fact that the two sources do not use the same attribution. In some cases it is catastrophic.
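As a minimal sketch of that reconciliation, assuming hypothetical channel names and invented figures (none of them come from a real engagement), the gap can be expressed per channel as the share of platform-claimed revenue that finance never recorded. The class, function and field names are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class ChannelRecord:
    channel: str
    spend: float             # media spend for the period (assumed > 0)
    platform_revenue: float  # revenue the ad platform attributes to itself (assumed > 0)
    finance_revenue: float   # revenue finance actually recorded for the channel


def reconcile(record: ChannelRecord) -> dict:
    """Compare platform-claimed figures with finance-recorded ones."""
    gap = record.platform_revenue - record.finance_revenue
    return {
        "channel": record.channel,
        "platform_roas": record.platform_revenue / record.spend,
        "finance_roas": record.finance_revenue / record.spend,
        # Share of the platform's claim that finance cannot see.
        "gap_share": gap / record.platform_revenue,
    }


# Invented numbers, for illustration only.
records = [
    ChannelRecord("paid_social", spend=10_000, platform_revenue=40_000, finance_revenue=25_000),
    ChannelRecord("paid_search", spend=8_000, platform_revenue=24_000, finance_revenue=21_000),
]

report = [reconcile(r) for r in records]
for row in report:
    print(
        f"{row['channel']}: platform ROAS {row['platform_roas']:.2f}, "
        f"finance ROAS {row['finance_roas']:.2f}, gap {row['gap_share']:.1%}"
    )
```

The sketch does not correct for attribution differences between the two sources. It only makes the size of the disagreement visible, which is the point at this stage.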

Week two

I draw a map grounded in established academic business models. Not yet a strategy map, but a causal map: how does spend translate into attention, attention into consideration, consideration into action, action into revenue, revenue into margin, margin into sustainable growth? I mark where the funnel leaks. I quantify the leakage at each stage. And I apply the constraint logic: of all the places where this system is underperforming, which single one, if fixed, would produce the greatest improvement in overall output?
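The constraint logic can be sketched as a small calculation. This assumes a simplified multiplicative funnel, invented stage names and rates, and an "attainable" rate per stage that in practice would have to come from the interviews and the reconciled data. None of these figures are from a real client, and a real constraint is often organisational rather than a funnel stage.

```python
from math import prod

# Hypothetical funnel: (stage, current conversion rate, attainable rate).
# All figures are invented for illustration.
funnel = [
    ("impression_to_visit", 0.020, 0.025),
    ("visit_to_cart", 0.080, 0.100),
    ("cart_to_purchase", 0.300, 0.600),
]


def output_rate(rates):
    """End-to-end conversion of a multiplicative funnel."""
    return prod(rates)


def find_constraint(stages):
    """Return the stage whose fix alone lifts end-to-end output the most."""
    current = [rate for _, rate, _ in stages]
    baseline = output_rate(current)
    best_stage, best_uplift = None, 0.0
    for i, (name, _, attainable) in enumerate(stages):
        # Lift only this stage to its attainable rate, keep the rest as-is.
        scenario = current[:i] + [attainable] + current[i + 1:]
        uplift = output_rate(scenario) / baseline - 1
        if uplift > best_uplift:
            best_stage, best_uplift = name, uplift
    return best_stage, best_uplift


stage, uplift = find_constraint(funnel)
print(f"Constraint: {stage} (fixing it alone lifts output by {uplift:.0%})")
```

In a purely multiplicative funnel the winner is simply the stage with the largest ratio of attainable to current rate. The sketch is mainly a reminder that the comparison is about the output of the whole system, not about which stage looks worst in isolation.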

I form a hypothesis. Then I go back to the stakeholders and ask whether the hypothesis is consistent with what they know. I am not looking for validation. I am looking for the stories that data cannot capture: the customer complaint that comes in every week but never makes it into a report, the product issue that the support team knows about but has never been escalated, the competitive shift that the sales team noticed but never surfaced to the marketing meeting.

Week three

I build the Transparency Stack: a reconciled, finance-backed view of the five to ten metrics that actually matter for this business. Not the twenty-seven KPIs in the dashboard. The five that connect directly to the constraint. The ones that will tell leadership, in real time, whether the intervention is working. The most important of them are not numbers.

And I write the constraint’s name at the top of a page. Not “our creative is weak.” Not “our CAC is rising.” Something specific, causal, and actionable. What this looks like in practice:

The constraint is retargeting window mismatch. The product category has a fourteen-day average consideration period for this cohort. By the time a customer reaches purchase intent, they have not seen an ad in eleven days. The creative is fine. The targeting needs further investigation. But the window has been wrong since the account was set up.

The constraint is informal channel abandonment. Two years ago, after a difficult quarter, the sales team stopped following up on leads from one particular source. The decision was never documented, and it simply became habit. Leads from that channel, which close analysis shows are the highest-intent ones in the pipeline, are being opened, skimmed, and left to expire. The ‘lead quality problem’ everyone complains about is a behaviour problem nobody has named.

The constraint is the brand–performance rift made structural: the creative team is briefing for premium perception, the performance team is optimising for impulse response, and both are running to the same audience, in the same week, with directly contradictory signals. The audience has learned not to trust either communication. The solution is not better creative. It is a sequencing decision that nobody has been given the authority to make, or has the technical skills to implement flawlessly.

Week four

I run a leadership workshop: a facilitated decision rather than a nervous presentation. I bring three options, ranked by impact, effort, and risk. I am explicit about what each option requires: which current activities would need to stop, which resources would need to shift, which assumptions would need to be challenged. I do not recommend a single answer. I present the reasoning and let leadership choose.

Then I leave them with a 90-day plan. A backlog of interventions, ranked by their relationship to the constraint, with owners, timelines, and success metrics tied to the Transparency Stack. Not to platform dashboards.

But the deliverable is not the point. The deliverable is a document. What actually matters is what changes in the room.

In the multi-brand retailer engagement described above, the shift in campaign logic was the visible intervention. What took longer, and mattered more, was this: the conversation about agency performance stopped happening. The commercial team started attending the marketing review. The founder stopped asking “why is our ROAS low?” and started asking “what are we building for customers that our suppliers cannot offer them directly?” The vocabulary of the organisation shifted from reactive to diagnostic. That shift is what compounds. It is what a Stop-Doing List cannot capture, but what embedded diagnosis, at its best, always produces.

Three moves, not twenty

The diagnostic process, compressed to its essential structure, is always the same three moves regardless of the category, the channel mix, or the organisational complexity.

Establish measurement truth

Reconcile what the platforms claim against what finance recorded. Most organisations are making significant decisions based on numbers that are materially wrong. Until this reconciliation exists, until you have a finance-backed, constraint-anchored view of the five to ten metrics that actually drive this specific business, every other strategic discussion is built on sand.

Name and attack the single constraint

Of all the places where this system is underperforming, which one, if fixed, would produce the greatest improvement in overall output? And again: not the easiest one to fix, not the one the agency is most comfortable addressing, not the one that appears most prominently in the weekly report. But the one that is the core of a strategic decision, the one that actually sits between where this business is and where it is capable of being.

Install a learning loop, not a one-off project

The constraint rarely disappears after a single intervention. What changes is the organisation’s ability to see it clearly, name it precisely, and act on it before it compounds into a quarterly emergency. The embedded operator’s job is to install that capacity, and then, eventually, to make their own continued presence unnecessary.

Most brands have been attempting move three without ever completing move one. They run strategy workshops before establishing what is actually true about their data. They test new campaigns before identifying which constraint those campaigns are intended to move. The sequence is not bureaucracy. It is the reason some interventions compound and others evaporate.

Why data alone cannot close the gap

There is a deeper problem here that deserves naming.

The diagnosis gap is not ultimately a measurement problem. It is an epistemological one.

Rob Kitchin, one of the more rigorous thinkers on the limits of big data, makes the point that the accumulation of data does not in itself produce understanding. Data requires interpretation. Interpretation requires judgment. And judgment (as in the capacity to look at a field of information and determine what actually matters, what is signal and what is noise, what the pattern beneath the variance is telling you) is not a computational capability. It is a human one.

John Vervaeke at the University of Toronto calls this relevance realisation: the cognitive capacity that allows a mind to determine what is important in a given field of information. It is not just intelligence. It is the trained ability to see through data to structure, to look at a dashboard and understand not just what the numbers say, but what they are hiding, and what the organisation’s relationship to those numbers reveals about how it thinks.

This is what the Greek philosophical tradition called noēsis: the highest form of knowing, not accumulated from empirical observation alone, but arising from the integration of analysis, experience, and the kind of intuition that only comes from genuine immersion. Nous (mind, perception, direct knowing), not as pop-spirituality mysticism, but as the practical intelligence that emerges when a person has spent enough time inside enough organisations to begin reading the patterns beneath the surface.

This is the problem noētico lab is built to address. The name is not a philosophical conceit; it earns its etymology. Noēsis in practice is what develops after two decades in business and a decade inside the machine at Ogilvy, Mindshare, OMD, and the more surgical environments of specialist digital and e-commerce shops. Building the dashboards, running the attribution models, presenting in the quarterly reviews, and then leaving the agency world to embed directly: as CMO inside a luxury brand, as strategic consultant guiding a major gaming operator’s market entry, as growth partner inside a SaaS platform scaling internationally. Different categories, organisations, even continents. The same patterns appearing, every time, in every room where growth had stalled.

The practical intelligence that emerges from that accumulated immersion is the ability to look at a dashboard and understand not just what the numbers say, but what they are hiding, and what the organisation’s relationship to those numbers reveals about how it thinks. This is what noēsis means in practice. It is not a credential to display, but a capacity to deploy. And it is almost entirely absent from the current marketing services landscape, because the structures that people in marketing services operate within prevent the immersion that makes it possible. And it’s sad how much intelligence, skill and ability is choked under this automation.

This is simply an argument for a particular kind of human presence, with the right experience, the right method, and the right positioning, genuinely inside the organisation, trusted enough to hear the truth, and structured enough to convert what they see into decisions that change the system.

Who this is for - and who it is not

I want to be honest about something, because clarity here is more valuable than persuasion.

The embedded diagnostic model is not for everyone. It is not for companies that want campaigns run on their behalf as if marketing were a side hustle. It is not for companies that want a dashboard built and left. It is not for companies that believe their growth problem is a creative problem, a channel problem, or a spend problem, and are not open to the possibility that the constraint might sit somewhere more uncomfortable.

This model is for a specific kind of leader running a specific kind of company.

The company is founder-led, or led by its first real marketing hire. It scaled through performance marketing hustle (search, paid social, perhaps affiliates or marketplaces) and that approach worked until it stopped working. Revenue is real: seven figures or more, with a genuine marketing budget and the ambition to grow beyond the ceiling that performance marketing alone cannot break. The team is between ten and fifty people - large enough to have real organisational complexity, small enough that a single misdiagnosed constraint can consume months of capacity and budget.

The leader recognises, usually quietly, at the end of a quarterly review (or in the moment after the CFO asks the margin question) that they have been optimising something that was never the right thing to optimise. They are willing to give a diagnostic partner full access to their real data, their real conversations, their real organisational tensions, because they understand that diagnosis that works from partial, aggregated, obscured, noisy information is not diagnosis at all.

If you work for a company that has been running campaigns for the last eighteen months and cannot clearly answer the question “What is the single biggest constraint between where we are and where we want to be?”, that is the Diagnosis Gap. And it is the only question worth spending the next ninety days answering.

Three questions to ask yourself this Monday

Before the weekly or bimonthly performance review. Before the agency call. Before the slide deck gets opened.

One: What is the one number in our current dashboard that causes the most disagreement or confusion? Who is responsible for reconciling it with our finance data?

Two: If we could stop doing three marketing activities tomorrow, with no consequences, what would they be? And what does it tell us that we have not already stopped them?

Three: Where in our funnel do the most heated arguments erupt? Is the person responsible for that part of the system the person with the clearest view of what is causing it?

The answers to those three questions will not close the Diagnosis Gap. But they will tell you whether you have one. And if you do, if you find yourself reading a number that produces anxiety rather than clarity, or sitting in a meeting where a metric drop triggers a cascade of tactical responses before anyone has asked why, that is the signal worth paying attention to.

Growth is not a campaign problem, and it is not only about P&L. It is something much more than that: the longevity and wellbeing of an organisation, its ability to compound rather than merely survive. Growth has never been a campaign problem.

It is a diagnosis problem. And most brands have been buying the wrong solution for longer than they know.

noētico lab exists for leaders who are ready to close that gap from the inside. If this essay described the room you were in last Tuesday (or the Tuesday before that), a conversation starts here.

About the author

Manos Venieris is the founder of noētico lab, an embedded marketing & business consultancy built on the principle that the hardest and most valuable work in marketing is diagnostic, not executional. He spent a decade inside the machine at Ogilvy, Mindshare, OMD, and specialist digital and e-commerce agencies before embedding directly as CMO and growth consultant for companies across luxury, iGaming, and SaaS. He holds an MSc in Marketing Communication and International Marketing from AUEB, and has received the EACA award for communications effectiveness. He writes the noētico lab letter, a newsletter for leaders who suspect their dashboards are producing panic rather than understanding.

The noētico lab letter publishes whenever something important and helpful needs to be shared. Subscribe below if you want the next essay when it arrives.